The AI Treadmill: Why Keeping Up Is the Real Engineering Challenge

Three years in generative AI feels like a decade anywhere else. Since late 2022, when ChatGPT made large language models impossible to ignore, we have been fully immersed in applying this technology to software engineering. The pace has been relentless.

The evolution no one warns you about

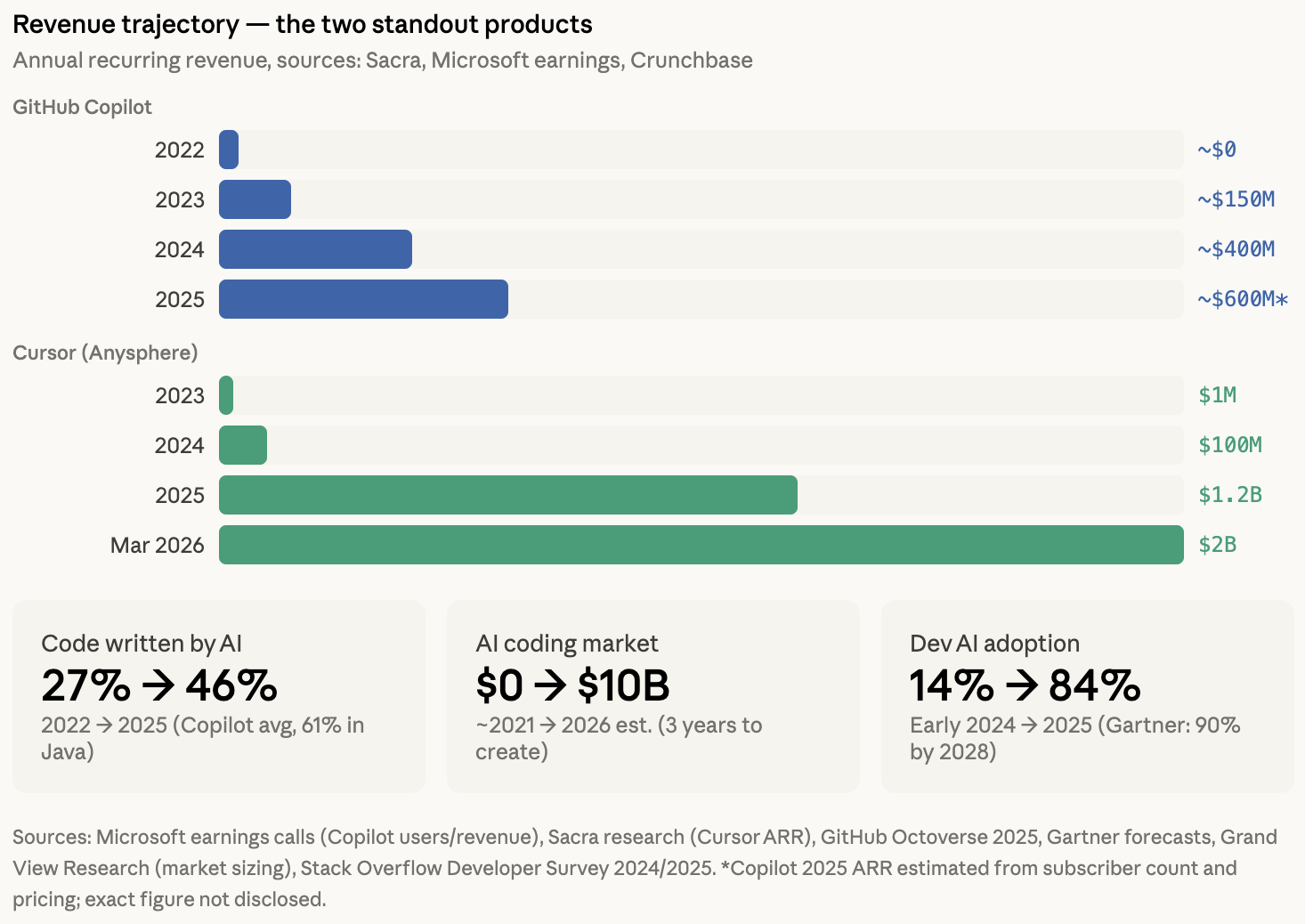

The early days were promising but rough. The first effective tool most engineers encountered was GitHub Copilot, which predates ChatGPT and used next-token prediction to offer a smarter IntelliSense. It could auto-complete the next line or the next few lines of code. Useful, but limited. You still did the thinking. The AI just typed faster.

Then the landscape shifted. Cursor built an entire editor around a fundamentally different interaction model: you could talk to your codebase, query it in natural language, and get answers that were aware of your full project context. Windsurf, launched by Codeium in late 2024, introduced agentic workflows as the first self-described agentic IDE. Claude Code and Codex arrived almost simultaneously in early 2025, pushing things toward autonomous, multi-step reasoning over entire repositories. Each of these represented a genuine leap, not just an incremental update.

To stay effective, you had to jump. And jump again. Over three years, we have worked with fifteen to twenty different tools and models. Not out of curiosity, but out of necessity. The model that was state-of-the-art in March was outperformed by July. The tool that introduced a breakthrough feature in Q1 was matched or surpassed by a competitor in Q2.

The cost most teams underestimate

Here is what rarely gets discussed: keeping up with this evolution is a full-time job. It is not just about swapping one tool for another. Each new model has different strengths, different failure modes, different optimal prompting strategies. Each new tool requires rethinking workflows, re-evaluating guardrails, and re-learning what works.

In 2023, you needed heavy manual oversight and extensive prompt engineering to get decent code generation. Non-reasoning models could handle data retrieval through natural language but fell apart on anything requiring multi-step analysis. By 2025, agentic architectures and reasoning-capable models changed what was possible entirely. But only if you rebuilt your approach to match.

Very few teams have the bandwidth for this. Most companies adopt one tool, learn its quirks, and stick with it even as it falls behind. Others chase every new release without the depth to extract real value. Both approaches leave significant capability on the table.

Now the same thing is happening in operations

Everything described above played out in software development. But we are now seeing the exact same pattern emerge in software operations. AI SRE agents, automated incident analysis, intelligent runbooks, model-driven root cause detection. The tools and approaches are evolving just as fast, and the same treadmill applies.

A perfect example played out in just a few months. In November 2024, Anthropic introduced the Model Context Protocol (MCP), an open standard for connecting AI models to external tools and data sources. By mid-2025, MCP had exploded. Server downloads grew from roughly 100,000 to over 8 million. OpenAI, Google, and Microsoft all adopted it. Thousands of MCP servers appeared. For a moment, it looked like the definitive integration layer for AI agents, including for operations use cases.

Then reality set in. Security researchers found serious vulnerabilities across the MCP ecosystem: seven CVEs in a single month, path traversal risks in 82% of analyzed implementations, prompt injection attacks through tool poisoning. At the same time, developers and SREs started connecting local agents via MCP to production environments, interacting with Kubernetes clusters, cloud CLIs, and infrastructure tooling. Powerful, but risky. In these setups, MCP effectively hands the user's own permissions to the model, with limited guardrails.
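To make the path traversal risk concrete, here is a minimal Python sketch of the kind of check a file-serving tool needs before it touches a path supplied by a model. The function name `resolve_inside` is our own illustration, not an API from any MCP SDK, and a real server would need more than this single check.

```python
from pathlib import Path


def resolve_inside(root: Path, requested: str) -> Path:
    """Return the resolved path if it stays under root, else raise ValueError.

    Hypothetical helper for illustration only.
    """
    root = root.resolve()
    candidate = (root / requested).resolve()
    # resolve() collapses ".." segments and follows symlinks, so the
    # comparison happens on the real target rather than on the raw string.
    if candidate != root and root not in candidate.parents:
        raise ValueError(f"path escapes allowed root: {requested!r}")
    return candidate


if __name__ == "__main__":
    workspace = Path("workspace")
    print(resolve_inside(workspace, "notes/today.md"))
    try:
        resolve_inside(workspace, "../../etc/passwd")
    except ValueError as err:
        print("rejected:", err)
```

A tool that joins the root and the requested path as plain strings, without resolving them first, is the pattern this guard is meant to replace.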

And now, just a few months later in early 2026, the narrative is already shifting. Many people still talk about MCP as the standard. But among practitioners who are pushing the boundaries, a different consensus is forming: models work best not with custom protocol interfaces but with the interfaces already deeply represented in their training data, namely CLI tools and shell execution. A single MCP server can consume tens of thousands of tokens in schema overhead before a model even starts working. A CLI command costs a fraction of that and leverages knowledge the model already has from billions of lines of terminal interactions in its training corpus.
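The token argument can be illustrated with a rough back-of-the-envelope sketch in Python. Everything here is an assumption for illustration: the tool definition is hypothetical and not taken from any real MCP server, the figure of 40 tools is arbitrary, and the four-characters-per-token rule is a crude heuristic rather than a real tokenizer.

```python
import json

# Hypothetical tool definition in the JSON Schema style that tool-calling
# protocols commonly use. Not copied from any real server.
HYPOTHETICAL_TOOL = {
    "name": "list_pods",
    "description": "List pods in a Kubernetes namespace, optionally filtered by label selector.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "namespace": {"type": "string", "description": "Namespace to query."},
            "label_selector": {"type": "string", "description": "Optional label filter."},
        },
        "required": ["namespace"],
    },
}

# The equivalent request expressed as a plain CLI call.
CLI_COMMAND = "kubectl get pods -n payments -l app=api"


def estimate_tokens(text: str) -> int:
    """Crude estimate: about four characters per token. Real tokenizers differ."""
    return max(1, len(text) // 4)


def schema_overhead(tools: list[dict]) -> int:
    """Estimated tokens spent describing tools before any work starts."""
    return sum(estimate_tokens(json.dumps(tool)) for tool in tools)


if __name__ == "__main__":
    # Assume a server that exposes 40 tools of similar size (illustrative number).
    tools = [HYPOTHETICAL_TOOL] * 40
    print("schema tokens (est.):", schema_overhead(tools))
    print("CLI command tokens (est.):", estimate_tokens(CLI_COMMAND))
```

The absolute numbers mean little. The point is the shape of the cost: schema overhead is paid up front and grows with the number of exposed tools, while a CLI call is paid only when it is used.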

This shift took months, not years. MCP dominated the conversation for most of 2025. By late 2025, the CLI narrative started gaining traction. As of March 2026, it is overtaking MCP in practice among the teams that move fastest. Most people have not caught up yet. This is the treadmill in action, now in operations.

Why operations requires a different setup

Whether engineers use MCP or CLI, the current approach to AI-assisted operations typically means running agents locally. On your own machine, with your own credentials, under your own supervision. For software development, that works. You are writing code, reviewing diffs, running tests. The agent operates in your development environment and you are there to watch it.

Operations is fundamentally different. Incidents do not wait until someone is at their desk. Root cause analysis should not depend on an individual engineer's machine being online. Production access needs organizational controls, audit trails, and permission boundaries, not a developer's personal credentials passed through to a model. And the security risks that are already concerning in development become unacceptable in production: open-ended tool access, privilege escalation, unvetted integrations running against live infrastructure.
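As a rough illustration of what "permission boundaries and audit trails" can mean in code, here is a minimal Python sketch of a gate that sits between an agent and the shell. It is a sketch under stated assumptions, not a description of any product's implementation: the read-only verb list, the `AuditLog` class, and the `gate` function are all hypothetical, and a real policy would come from organizational configuration.

```python
import shlex
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: only read-only kubectl verbs are permitted.
READ_ONLY_KUBECTL_VERBS = {"get", "describe", "logs", "top"}


@dataclass
class AuditLog:
    """In-memory audit trail; a real one would be durable and tamper-evident."""
    entries: list = field(default_factory=list)

    def record(self, actor: str, command: str, allowed: bool) -> None:
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "command": command,
            "allowed": allowed,
        })


def is_allowed(command: str) -> bool:
    """Allow only `kubectl <read-only verb> ...`; reject everything else."""
    try:
        argv = shlex.split(command)
    except ValueError:
        # Unbalanced quotes and similar parse errors are rejected outright.
        return False
    return len(argv) >= 2 and argv[0] == "kubectl" and argv[1] in READ_ONLY_KUBECTL_VERBS


def gate(actor: str, command: str, log: AuditLog) -> bool:
    """Decide and record every request, allowed or not.

    Execution is deliberately left out. If added, the parsed argument list
    should be run without a shell so that ';' or '|' are never interpreted.
    """
    allowed = is_allowed(command)
    log.record(actor, command, allowed)
    return allowed


if __name__ == "__main__":
    log = AuditLog()
    for cmd in ("kubectl get pods -n prod", "kubectl delete pod api-0", "rm -rf /tmp/x"):
        print(cmd, "->", gate("agent-1", cmd, log))
    print(len(log.entries), "audit entries recorded")
```

The design choice worth noting is that the decision and the audit record live outside the agent and outside any individual's laptop, which is exactly what a local setup with personal credentials cannot offer.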

Local agent execution simply does not meet the requirements of production operations.

Where Hyground stands out

This is exactly where Hyground comes in. We have spent three years on the AI treadmill, tracking every shift in models, tools, architectures, and integration patterns. We do this so our customers do not have to.

Hyground connects to any large language model. Our architecture is built to adapt, so we can leverage the most powerful model capabilities available at any given moment. When reasoning models unlocked genuine incident analysis, we integrated them. When agentic architectures matured enough for reliable multi-step operations workflows, we adopted them. When the integration landscape shifted from MCP to CLI-native tooling, we were already evaluating and adjusting.

But staying current on models is only half of it. Hyground is purpose-built for operations, which means we solve the problems that local agent setups cannot. We provide curated, security-vetted integrations rather than open-ended tool access. We enforce permission boundaries and runtime controls that prevent privilege escalation. We track CVEs across the agent and tooling ecosystem. And Hyground operates independently of any individual engineer's machine, which means incident analysis, monitoring, and operational tasks run continuously, not only when someone happens to be at their desk.

Our customers do not need to track which model handles root cause analysis best this quarter, or whether the integration layer they built six months ago is already a security liability. We do that. We evaluate, we benchmark, we adjust, and we ship the improvements directly into the platform.

The bottom line

The pace of AI development is not slowing down. The treadmill that has been running in software development for three years is now running in operations. The organizations that extract real, compounding value from AI in their operational workflows will be the ones that either invest heavily in staying at the frontier, or partner with someone who already does.

Want to know how we stay on top of the AI evolution and what that means for your software operations? We are always happy to talk. Reach out if you want a deep dive into our approach, a conversation about what is working in AI-assisted operations today, or just to exchange ideas. We are open.
