Why 87% of Your Prompt Isn't Your Prompt

When OpenAI introduced function calling in June 2023, it felt like the missing piece for building useful AI agents. Finally, LLMs could interact with the real world. But anyone who shipped production systems quickly learned the truth: it was finicky. You had to manage the tool call loop yourself, handle errors gracefully, and hope the model picked the right function from your carefully crafted definitions.

Then came MCP.

In November 2024, Anthropic open-sourced the Model Context Protocol: a universal adapter for connecting LLMs to external systems. Instead of building N×M custom integrations (N applications × M data sources), you build N+M: each application implements the MCP client once, each tool implements the server once, and everything interoperates.
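The saving is easiest to see with concrete numbers. Here is a minimal sketch of the arithmetic, using hypothetical counts of 5 applications and 20 data sources:

```python
def integration_counts(apps: int, sources: int) -> tuple:
    """Return (point-to-point integrations, MCP implementations).

    Without a shared protocol, every application needs a custom integration
    for every data source: apps * sources. With MCP, each application
    implements the client once and each data source implements the server
    once: apps + sources.
    """
    return apps * sources, apps + sources


# Hypothetical numbers for illustration only.
custom, mcp = integration_counts(5, 20)
print(f"custom: {custom}, mcp: {mcp}")  # custom: 100, mcp: 25
```

The gap widens as either side grows. Adding a 21st data source costs five new custom integrations without MCP, but only one new server with it.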

Within a year, MCP achieved something rare: cross-competitor adoption. OpenAI, Google, and Microsoft all support it. SDKs exist for Python, TypeScript, Go, Rust, and more. The community has built thousands of servers covering everything from GitHub to Salesforce to local filesystems.

The MCP Protocol

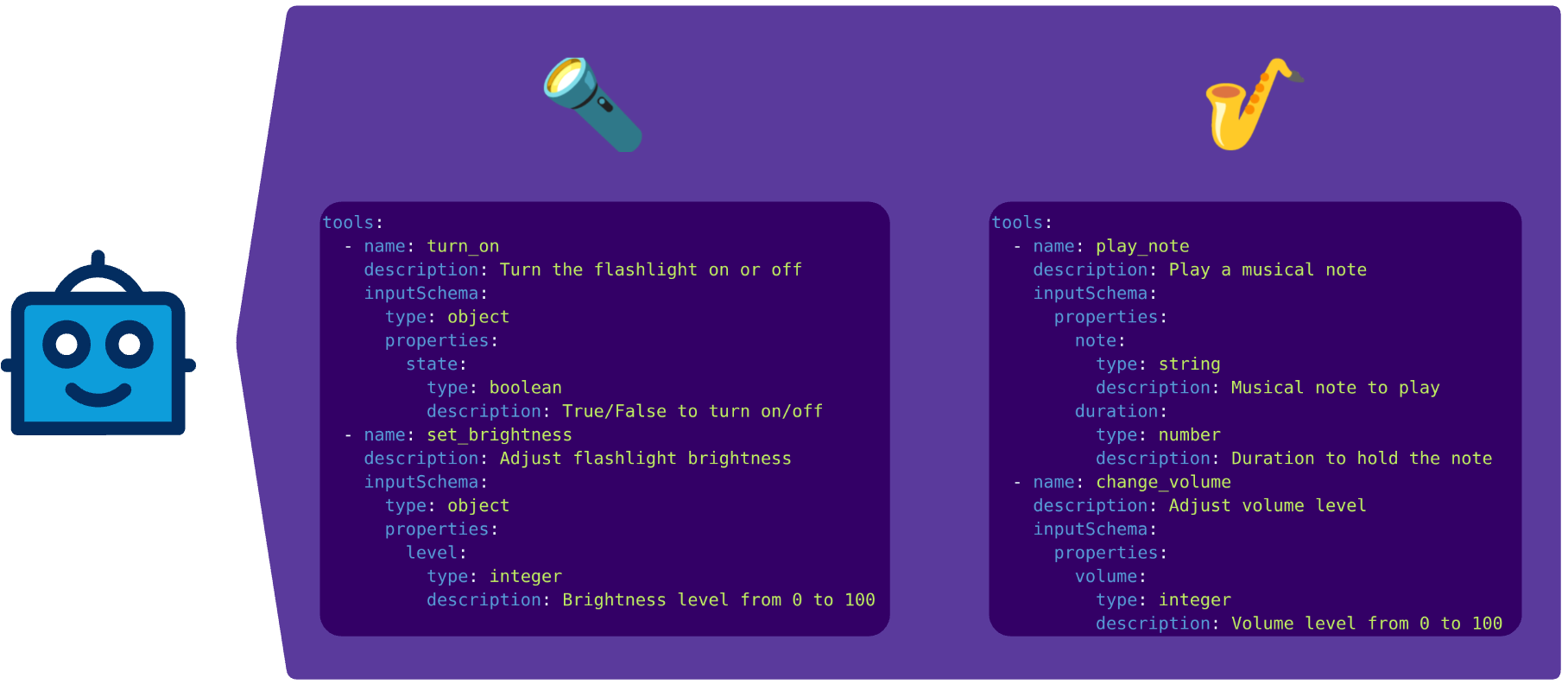

MCP servers allow LLMs to discover and use tools at runtime. The image below shows the MCP tool specification.

For every tool, the server provides a name, a description, and a JSON Schema describing its input (and, optionally, its output). The LLM uses this information to select the appropriate tool for the current task.
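To make the cost concrete, here is a hypothetical tool definition shaped like an entry in the `tools` array of an MCP `tools/list` response (`name`, `description`, `inputSchema`). The tool `query_logs` and its parameters are invented for illustration.

```python
import json

# Hypothetical tool definition in the shape MCP servers advertise via tools/list.
query_logs_tool = {
    "name": "query_logs",
    "description": "Search application logs for a service within a time range.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "service": {"type": "string", "description": "Service name"},
            "query": {"type": "string", "description": "Search expression"},
            "since_minutes": {"type": "integer", "minimum": 1},
        },
        "required": ["service", "query"],
    },
}

# Definitions like this are passed to the model with every request, so each
# property and description occupies context whether or not it is used.
serialized = json.dumps(query_logs_tool)
print(len(serialized), "characters for one tool")
```

A single small tool is cheap. The problem appears when dozens of servers each contribute dozens of definitions like this one.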

This lets you add tools dynamically and expose them to the LLM. But while MCP solves the problem of tool discovery, it introduces a new one: context composition.

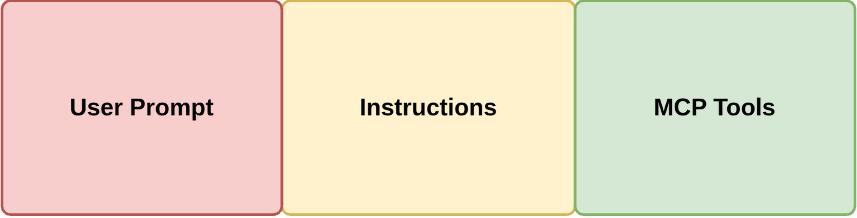

When you invoke an MCP-enabled agent, its context window is composed of three parts: the user prompt, your system instructions, and the definitions of every connected MCP tool.
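A quick way to see this composition for your own agent is to estimate the size of each part. The sketch below assumes a rough 4-characters-per-token heuristic instead of a real tokenizer, and the tools, prompt, and instructions are hypothetical placeholders.

```python
import json


def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): ~4 characters per token for English and JSON.
    # Swap in your model's actual tokenizer for real measurements.
    return max(1, len(text) // 4)


def context_composition(user_prompt: str, instructions: str, tools: list) -> dict:
    """Estimate each part's share of the context window, in percent."""
    parts = {
        "user_prompt": estimate_tokens(user_prompt),
        "instructions": estimate_tokens(instructions),
        # Flat loading: every connected tool definition is serialized into
        # context, whether or not the current task needs it.
        "tool_definitions": sum(estimate_tokens(json.dumps(t)) for t in tools),
    }
    total = sum(parts.values())
    return {name: 100 * count / total for name, count in parts.items()}


# Hypothetical inputs: 30 tools with verbose descriptions and a short question.
tools = [
    {
        "name": f"tool_{i}",
        "description": "Performs one operation against an ops backend. " * 5,
        "inputSchema": {"type": "object", "properties": {}},
    }
    for i in range(30)
]
shares = context_composition(
    user_prompt="Why is checkout latency up?",
    instructions="You are an ops assistant. Be precise and cite evidence. " * 10,
    tools=tools,
)
for name, pct in shares.items():
    print(f"{name}: {pct:.1f}%")
```

Run against your real tool list and system prompt, the ratios matter more than the absolute numbers, which the heuristic only approximates.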

At Hyground, we connected our AI Ops agents to the tools they needed: log and metric analysis, documentation integration, infrastructure provider integration, and more.

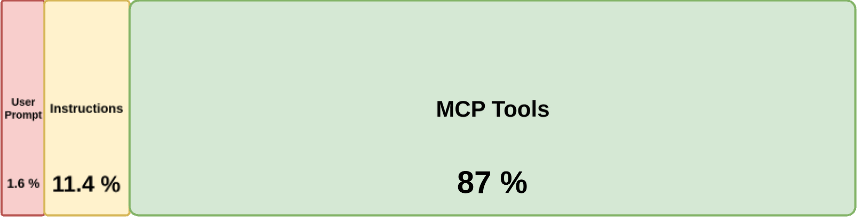

The 87% of context that isn't your prompt

87% of our context was MCP tool definitions. 11.4% was instructions. The user's actual prompt? 1.6%.

This isn't a Hyground-specific problem. MCP clients typically load every tool definition upfront, and the protocol has no native mechanism for semantic filtering or lazy loading. Every connected server dumps its full schema into context before the LLM sees a single user token.

The consequences compound. Major clients have imposed hard limits: Cursor caps at 40 tools, GitHub Copilot at 128. These caps exist because LLM performance degrades when selecting from large, flat tool lists. The model wastes attention on irrelevant tool descriptions, and intermediate tool results further bloat the context.

The Solution: Dynamic tool discovery

The industry is converging on a pattern: don't load all tools upfront. Instead, give the agent a discovery mechanism.

The idea is straightforward: instead of injecting every tool definition into context, provide a discovery tool that can query what's available. The agent first discovers which servers and capabilities exist, then selectively loads only the schemas it needs for the current task.
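The pattern can be sketched as a client-side registry that keeps full definitions out of context and exposes two meta-tools instead. The names `ToolRegistry`, `search_tools`, and `load_tool_schema` are hypothetical, and the keyword match is a naive stand-in for the semantic search a real implementation would likely use.

```python
from dataclasses import dataclass, field


@dataclass
class ToolRegistry:
    """Hypothetical registry backing a discovery tool.

    Full definitions live here, outside the model's context. The model only
    sees what search_tools and load_tool_schema return.
    """
    definitions: dict = field(default_factory=dict)

    def register(self, server: str, tool: dict) -> None:
        self.definitions[f"{server}.{tool['name']}"] = tool

    def search_tools(self, query: str) -> list:
        # Naive keyword match (assumption): returns names and one-line descriptions only.
        words = query.lower().split()
        hits = []
        for qualified_name, tool in self.definitions.items():
            text = f"{qualified_name} {tool['description']}".lower()
            if any(w in text for w in words):
                hits.append((qualified_name, tool["description"]))
        return hits

    def load_tool_schema(self, qualified_name: str) -> dict:
        # Only at this point does a full input schema enter the context.
        return self.definitions[qualified_name]


registry = ToolRegistry()
registry.register("logs", {"name": "query_logs", "description": "Search application logs.", "inputSchema": {"type": "object"}})
registry.register("metrics", {"name": "get_metric", "description": "Fetch a time series metric.", "inputSchema": {"type": "object"}})
registry.register("docs", {"name": "search_docs", "description": "Search internal documentation.", "inputSchema": {"type": "object"}})

matches = registry.search_tools("logs")
print(matches)  # [('logs.query_logs', 'Search application logs.')]
schema = registry.load_tool_schema(matches[0][0])
```

The model's starting context then holds two small meta-tool definitions rather than every schema from every server.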

Anthropic recently published their approach: present MCP servers as code APIs with TypeScript wrappers. The agent discovers tools by exploring a filesystem, reads only the definitions it needs, and processes results in an execution environment before returning to the model. Their reported result: context usage dropped from 150,000 tokens to 2,000—a 98.7% reduction.
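The filesystem part of this approach amounts to progressive disclosure: list servers, then one server's tools, then read one file. Below is a minimal Python sketch of that navigation, assuming a hypothetical `servers/<server>/<tool>.ts` layout. The wrapper contents are plain strings that are never executed, and `callMCPTool` inside them is an assumed helper, not a documented API.

```python
import tempfile
from pathlib import Path

# Hypothetical wrapper sources: one typed function per tool, forwarding to the
# MCP server through an assumed callMCPTool helper.
WRAPPERS = {
    "logs": {
        "queryLogs": "export async function queryLogs(input: { service: string; query: string }) {\n"
                     "  return callMCPTool('logs__query_logs', input);\n}\n",
    },
    "metrics": {
        "getMetric": "export async function getMetric(input: { name: string }) {\n"
                     "  return callMCPTool('metrics__get_metric', input);\n}\n",
        "listDashboards": "export async function listDashboards() {\n"
                          "  return callMCPTool('metrics__list_dashboards', {});\n}\n",
    },
}


def write_wrappers(root: Path, servers: dict) -> None:
    """Write one file per tool: root/servers/<server>/<tool>.ts."""
    for server, tools in servers.items():
        server_dir = root / "servers" / server
        server_dir.mkdir(parents=True, exist_ok=True)
        for tool_name, source in tools.items():
            (server_dir / f"{tool_name}.ts").write_text(source)


def list_servers(root: Path) -> list:
    # Level 1: only server names enter the context.
    return sorted(p.name for p in (root / "servers").iterdir() if p.is_dir())


def list_tools(root: Path, server: str) -> list:
    # Level 2: tool names for one server, still no schemas.
    return sorted(p.stem for p in (root / "servers" / server).glob("*.ts"))


def read_tool(root: Path, server: str, tool: str) -> str:
    # Level 3: the single definition the task actually needs.
    return (root / "servers" / server / f"{tool}.ts").read_text()


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    write_wrappers(root, WRAPPERS)
    servers = list_servers(root)                # ['logs', 'metrics']
    metric_tools = list_tools(root, "metrics")  # ['getMetric', 'listDashboards']
    source = read_tool(root, "metrics", "getMetric")
    print(servers, metric_tools)
    print(source)
```

In the approach the post describes, the agent performs these steps itself with filesystem access inside a sandbox; the functions above only illustrate the order in which information reaches the model.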

Cloudflare arrived at the same idea independently and calls it "Code Mode". Their reasoning: LLMs have seen millions of lines of real TypeScript in training, but only synthetic examples of tool calls. Wrapping MCP tools as TypeScript APIs lets the model leverage that deep familiarity.
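Both approaches depend on turning a tool's JSON Schema into a TypeScript signature the model can read like any other API. Here is a simplified sketch of that conversion; it is not Cloudflare's or Anthropic's code, and it assumes flat input objects with primitive properties only.

```python
# Map a few JSON Schema primitive types to TypeScript (assumption: flat objects only).
JSON_TO_TS = {"string": "string", "integer": "number", "number": "number", "boolean": "boolean"}


def to_typescript(tool: dict) -> str:
    """Render an MCP-style tool definition as a TypeScript declaration."""
    schema = tool["inputSchema"]
    required = set(schema.get("required", []))
    fields = []
    for prop, spec in schema.get("properties", {}).items():
        optional = "" if prop in required else "?"
        ts_type = JSON_TO_TS.get(spec.get("type"), "unknown")
        fields.append(f"{prop}{optional}: {ts_type}")
    params = "; ".join(fields)
    return (
        f"/** {tool['description']} */\n"
        f"declare function {tool['name']}(input: {{ {params} }}): Promise<unknown>;"
    )


# Hypothetical tool definition for illustration.
tool = {
    "name": "query_logs",
    "description": "Search application logs.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "service": {"type": "string"},
            "query": {"type": "string"},
            "since_minutes": {"type": "integer"},
        },
        "required": ["service", "query"],
    },
}
print(to_typescript(tool))
# /** Search application logs. */
# declare function query_logs(input: { service: string; query: string; since_minutes?: number }): Promise<unknown>;
```

A production generator would also need to handle nested objects, arrays, enums, and output types.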

MCP solved the right problem: standardizing how AI systems connect to tools. But the current architecture has a scaling ceiling that becomes painfully obvious in production. If you're building MCP integrations today, consider implementing layered discovery patterns rather than flat tool loading.

The good news: the community is actively working on this. Proposals for hierarchical tool management and lazy loading are under discussion. Until then, measure your context—you might find that 87% of your prompt isn't your prompt either.
